March 25, 2026

Product Release

Native, Structured and Long-term Memory Plugin for OpenClaw: From 30k+ Memory Skill Downloads to a Native Plugin

TL;DR

Native OpenClaw Integration: Plugs directly into prompt assembly via ClawHub, autonamically manages your memory with a Three-Layer Memory architecture for your agent

Context Tree: Deep, structured Knowledge Base

Workspace Memory: Core rules, preferences, and guidelines

Daily Memory: Session Notes

Git-like Stateful Memory: Organizes memory into a semantic hierarchy of human-readable, diffable, and git-friendly Markdown files.

Local-first but Fully Portable: Local-by-default, fully portable for multi-agent teams, and installs via a single safe

curlscript.Top Market Accuracy: Achieves an industry-leading 92.2% retrieval accuracy (on both LoCoMo and LongMemEval benchmark), maintain 90% accuracy even with cheap & lightweight model

Check the doc:

Set up in seconds:

Individuals and companies adopting OpenClaw for automation soon realize memory is a huge bottleneck. To make an agent a truly 24/7 employee capable of complex workflows, the technical setup isn’t the hardest part. The real challenge is giving it a "brain" that remembers exact project details, past decisions, and the changes made by all teammates over time.

The first version of our memory system in OpenClaw was a massive success: 30k+ downloads within a week and 500k+ organic impressions overnight for the launch post on X. That shows exactly how much OpenClaw power users crave a truly long-term and stateful memory for their agents, and ByteRover solves exactly that.

But we know memory can be much better by being more native. That is why we decided to go beyond the skill layer. The next step was building a deeper integration that feels like part of the agent’s actual context assembly flow.

The Big News: Native Memory Plugin for OpenClaw

Today, we're excited to share an important milestone in that direction: OpenClaw has merged the interface change that enables memory plugins to deliver a native, stateful context engine experience.

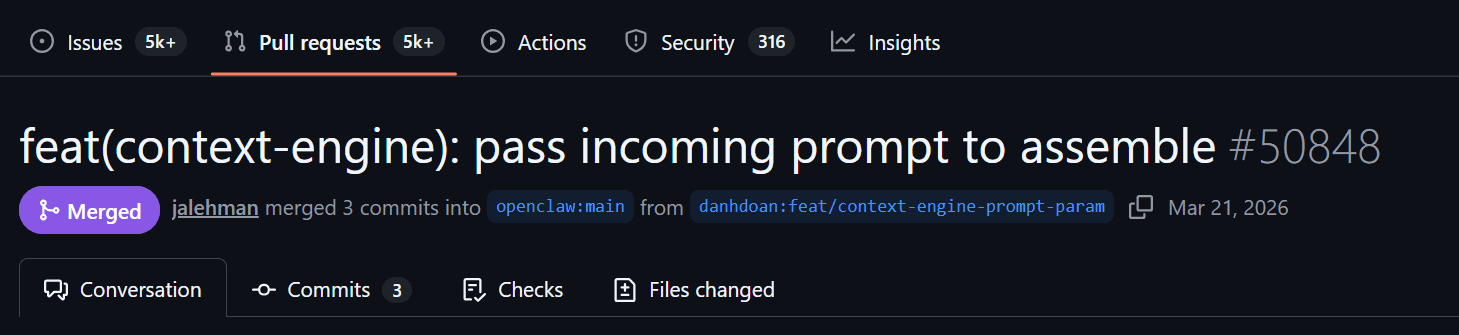

On March 21, 2026, OpenClaw merged PR #50848, which adds an optional prompt parameter to ContextEngine.assemble(). This allows retrieval-oriented engines to actually use the current user query when assembling context.

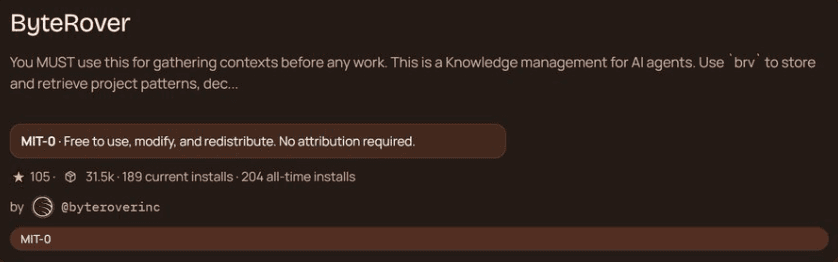

With this foundation in place, our ByteRover memory plugin is now live on NPM - ready to install and bring persistent, queryable context to your OpenClaw agents.

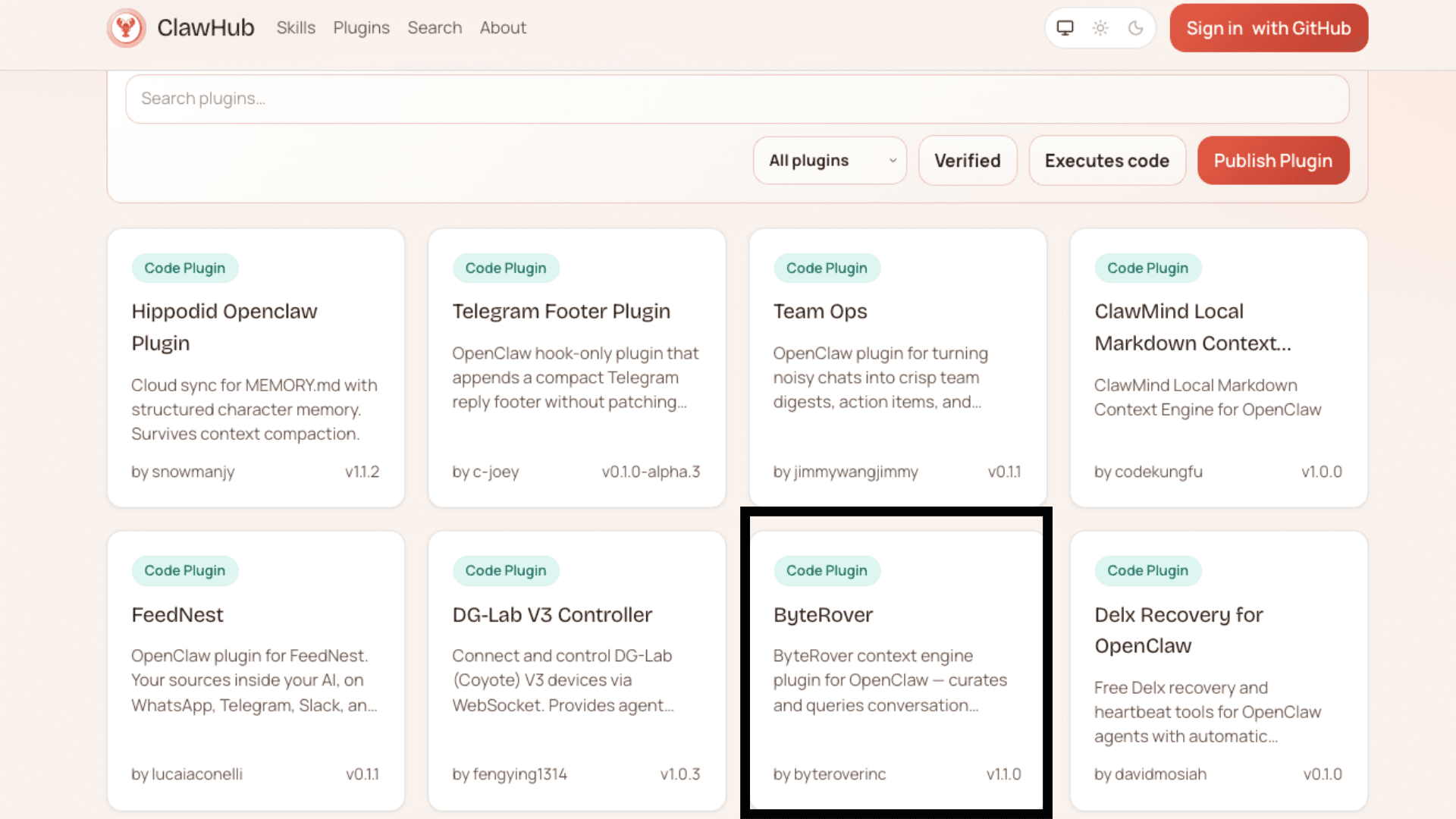

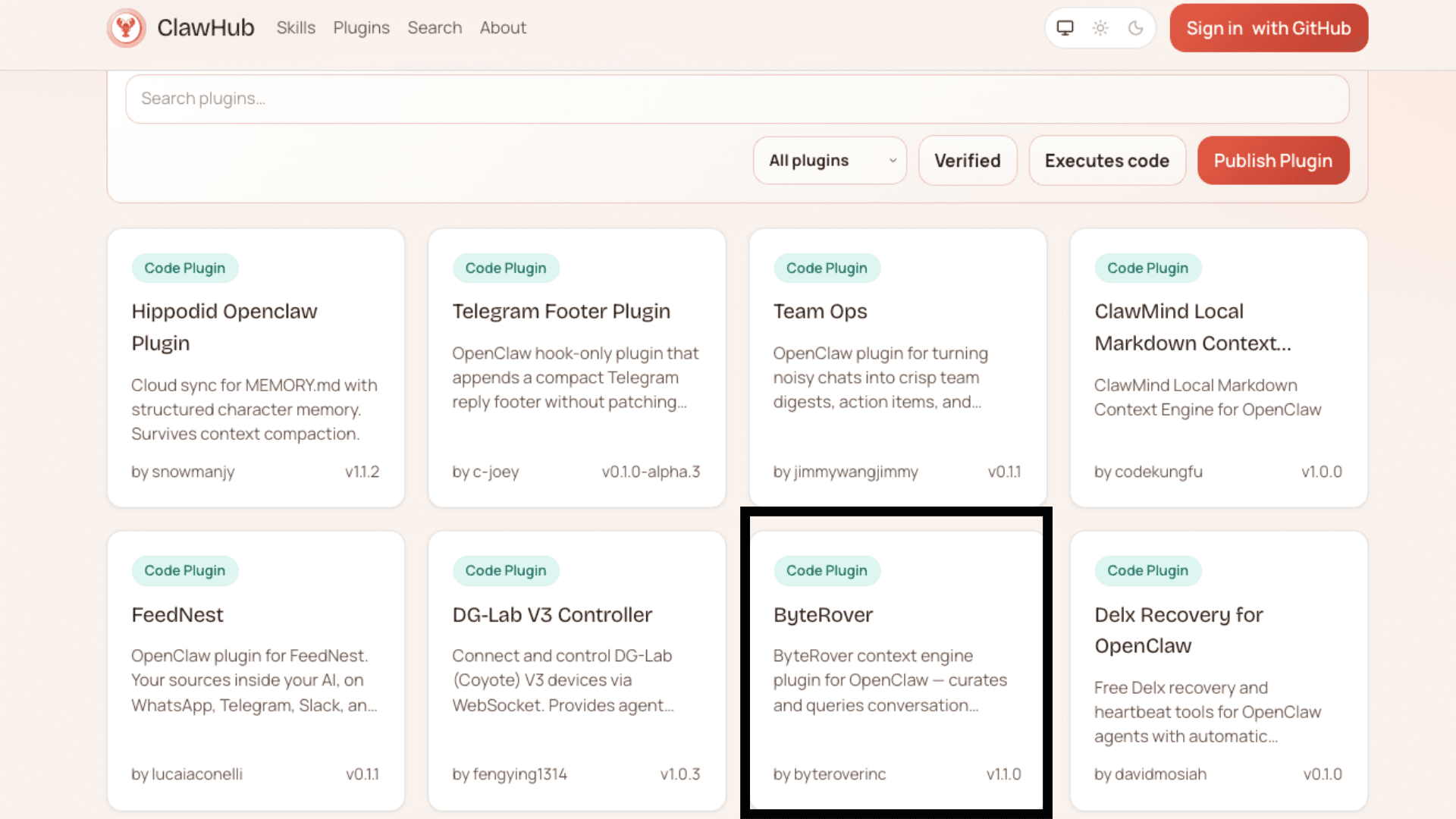

ByteRover Plugin is also available on ClawHub now

Right Context at Right Time: The 24/7 Memory Automation Loop

This plugin is a massive step forward because it doesn't just pull old memories into your prompt - it introduces a complete, native OpenClaw memory loop that works around the clock:

1. The Context Engine (Real-Time Learning):

Before each model runs (Assemble): It automatically injects the exact curated knowledge your agent needs directly into the system prompt.

After each run completes (After Turn): It instantly evaluates the turn and saves new decisions back to your memory tree.

The Best Part: It delegates memory compaction to OpenClaw (

ownsCompaction: false), so it plays perfectly with your native runtime without breaking anything.

2. Automatic Memory Flush (The Safety Net): It actively monitors your agent's context window. Right before the token limit fills up and forces a compaction, ByteRover automatically steps in to extract and save the most valuable insights from the current session so nothing gets lost.

3. Daily Knowledge Mining (The Morning Review): Think of this as an automated daily debrief. Every morning at 9:00 AM, it triggers a native OpenClaw cron job to sweep through yesterday's memory logs, extracting and storing big-picture architectural decisions and patterns.

Top-Market Accuracy, Zero Babysitting

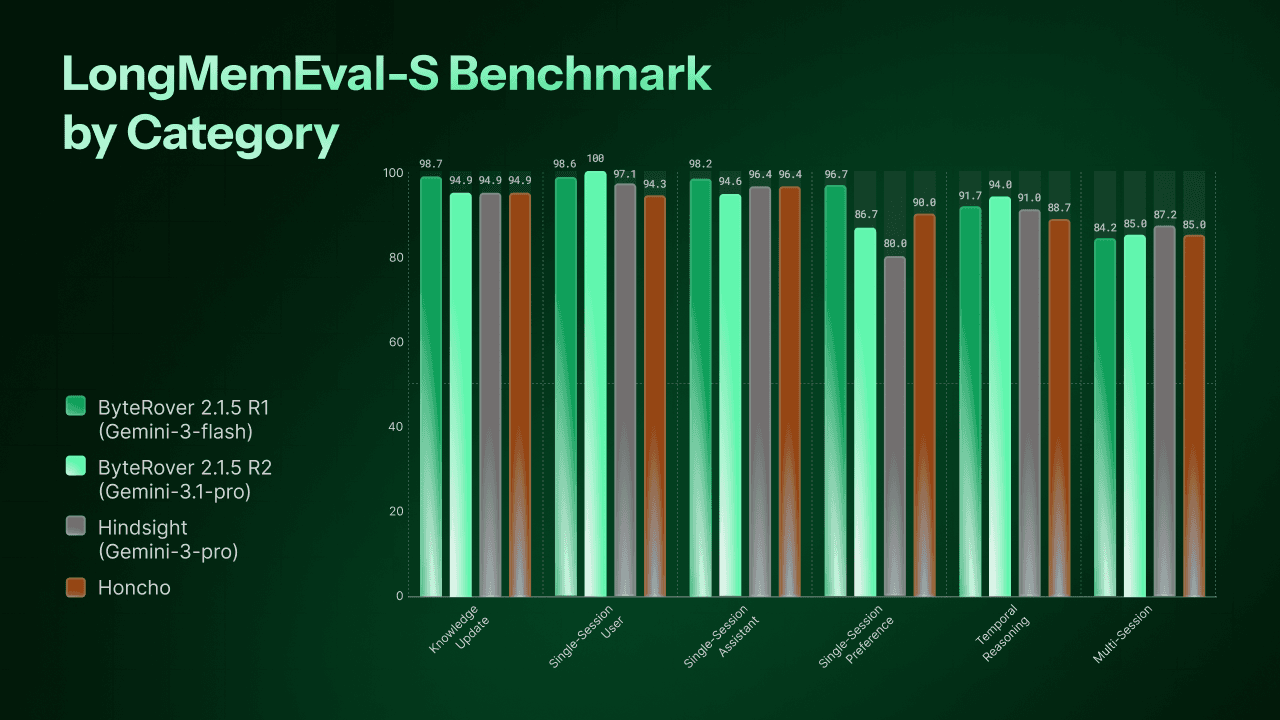

By moving from a flat file search to a structured, LLM-curated Context Tree, the results speak for themselves (>92% accuracy on LoCoMo and LongMemEval benchmarks). For end users, this means:

→ Check out the Locomo Benchmark

Instant answers, no digging: Surfaces the precise knowledge your agent needs instantly (>92% precision).

Real-time adaptation: When you update the knowledge mid-project, the agent updates its understanding instantly without clinging to outdated facts (99% Knowledge Update).

Context across conversations: It connects the dots between entirely different chat sessions - solving one of the hardest memory problems in real workflows (84% Multi-Session recall).

Cheaper, faster models: Because the retrieval architecture does the heavy lifting, you can use cheaper, faster models to beat out the expensive frontier models.

Don't Lose Past History

For the 30k+ users who downloaded the Memory Skill, there are likely gigabytes of messy, flat daily markdown logs sitting in storage. There is no need to start over. A single curation command run on an existing memory/ directory instantly converts months of flat notes into a perfectly organized Context Tree.

Local First, Team-Ready

Memory stays entirely local by default - no cloud required, no accounts needed. But when it’s time to collaborate, the Context Tree can easily be pushed to the cloud. Teams can share a single memory space across multiple agents, complete with git-like version control, diffs, and rollbacks.

Super Easy Setup

Quick note: OpenClaw v2026.3.22+ is required

If you're upgrading from an earlier version, remove the plugins.allow field from your openclaw.json and restart the gateway - this field is no longer needed and may interfere with newer OpenClaw releases.

The complete automation loop installs via a single interactive script that safely backs up existing configurations and sets everything up in seconds:

Or install the plugin only:

Check the doc: